OVERVIEW

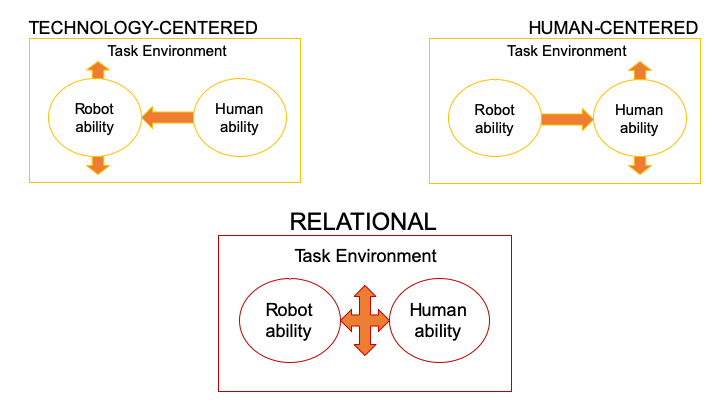

We conduct research in the field and in simulated environments, apply systems thinking to human-automation integration problems, explore dyadic exchanges between humans and technology, and use mixed methods to improve technology integration in high-criticality work settings. Advances in automation have led to increasingly capable machines, from adaptive algorithms to embodied social agents. Instead of operating remotely behind safety cages, new automation is moving into our more unpredictable human world. These changes can shift system goals from reliability to resilience, which we take to be the sustained ability to adapt to future surprises as conditions evolve. Our preliminary findings indicate the importance of considering social exchange factors in human-automation interaction and the need for human-agent cooperation to support system resilience.

Human-agent Cooperation to Support System Resilience

From self-driving vehicles to sophisticated decision support systems, automation is being built to be increasingly autonomous. Computational advances have led to machines capable of automating whole functions that previously required human operation or intervention at various steps along the way. As such, workers and machines are entering into increasingly coordinative and cooperative relationships, in contexts where successful interactions demand teamwork. Resilience Engineering treats such systems as sets of interacting agents (human or machine) that collaborate to achieve shared goals in dynamic and high-criticality or safety-sensitive environments. Recent projects study human-agent cooperation on dynamic and uncertain tasks, using simulated microworld environments.

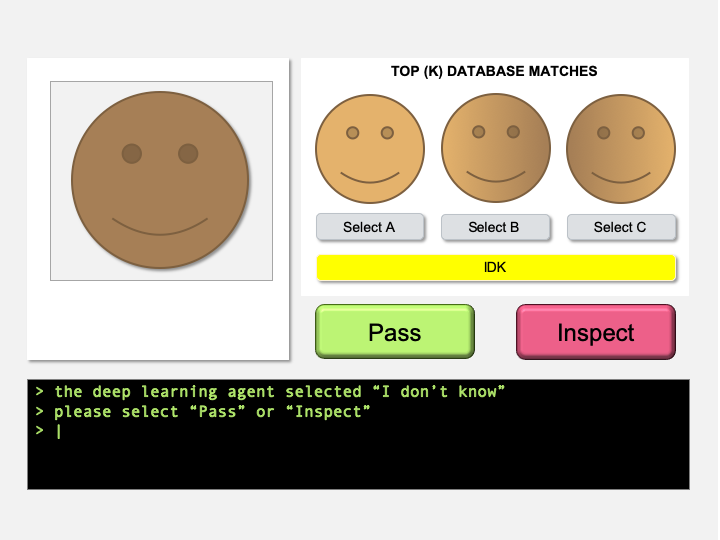

Human-AI Joint Decision Making

Artificial intelligence (AI) technologies are being deployed in national security, defense, and criminal justice. Previous work has shown that some of these technologies should not be fully automated in critical tasks with ethical and legal considerations. AI should therefore defer decisions to a human when it cannot predict accurately or fairly. The goal of understanding human-AI decision-making is to balance the benefits of algorithmic automated decisions with the benefits of human intelligence and discernment. Recent projects study an AI-enabled face recognition system that under certain conditions defers identification of travelers to a human security officer. Our goal is to understand the factors that affect overall system performance and the broader social impact of such systems.

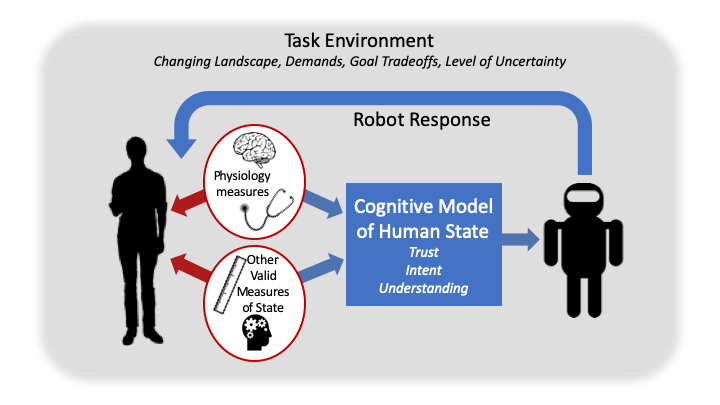

Trust in Automation

Trust in automation has emerged as a concern in wide-ranging domains, including automotive research, virtual agents, healthcare, and military applications. Past research focused on appropriately calibrated trust, reliance, and compliance, in line with system capabilities. As our relationship with automation shifts from supervisory to collaborative, understanding trust in automation is important in situations where cooperation among members of a human-agent team is needed. In such cases, distinguishing appropriate distrust from inappropriate suspicion depends on social affordances and signals of a shared purpose rather than perceptions of reliability or dependability. Recent projects study trust in virtual humans and automated agents in the domains of education, healthcare, and national security.

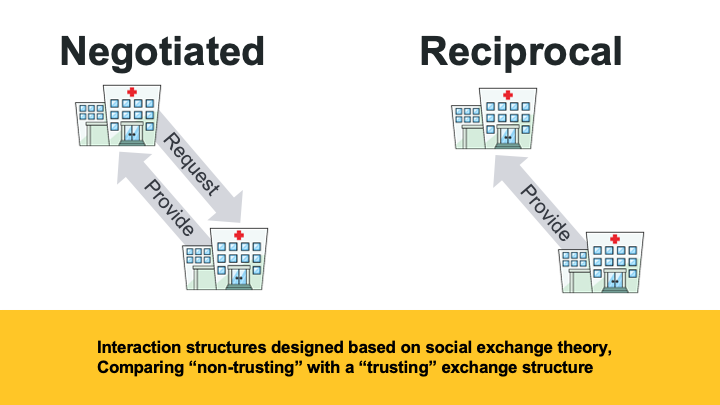

Social Exchange Factors in Human-Automation Interaction

Social Exchange Theory treats interactions between agents as transactions similar to exchanges of goods. These transactions communicate actions that shape perceptions of an exchange partner and interpretation of the partner’s subsequent actions. This has important implications for teams of people and automated agents interacting over time in a complex work environment, where shared goals may not be attained without first reconciling potentially conflicting local goals. When people and automation must work together to achieve a shared goal in these environments, the structures of those interactions may inadvertently shape perceptions and interpretations of intent and subsequent actions, and affect joint system performance. Recent projects study effects of reciprocal and negotiated social exchange structures between people and an automated agent in a shared resource management task.

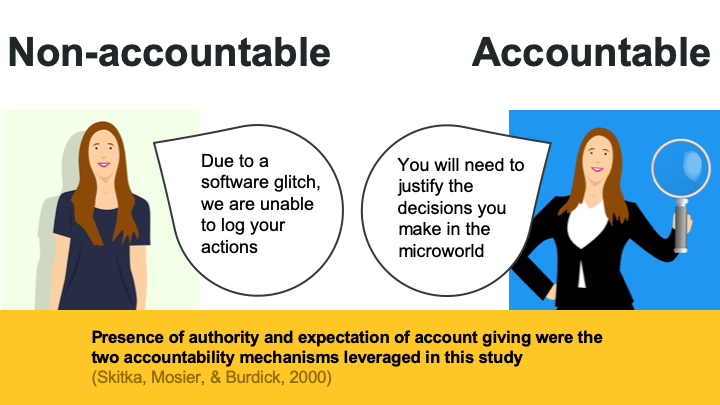

Accountability in Sociotechnical Systems

Accountability in sociotechnical systems is the pressure to attend to more information and employ multi-dimensional information processing strategies to identify appropriate responses. The goal of understanding accountability in sociotechnical systems is to balance the benefits of autonomy with the benefits of control in system design and performance. This research program impacts the future of work and human empowerment. Recent projects study accountability in increasingly automated or proceduralized environments in the domains of homeland security and healthcare.

Contextual Design in Healthcare and Transportation

With the Cognitive Systems Laboratory (CSL), Contextual Design methods were used to develop information management and decision-making devices that aid older adults in daily activities such as medication management and transportation. Both projects were conducted in collaboration with The Center for Health Enhancement Systems Studies.

Image: Affinity diagram from the Contextual Design process.

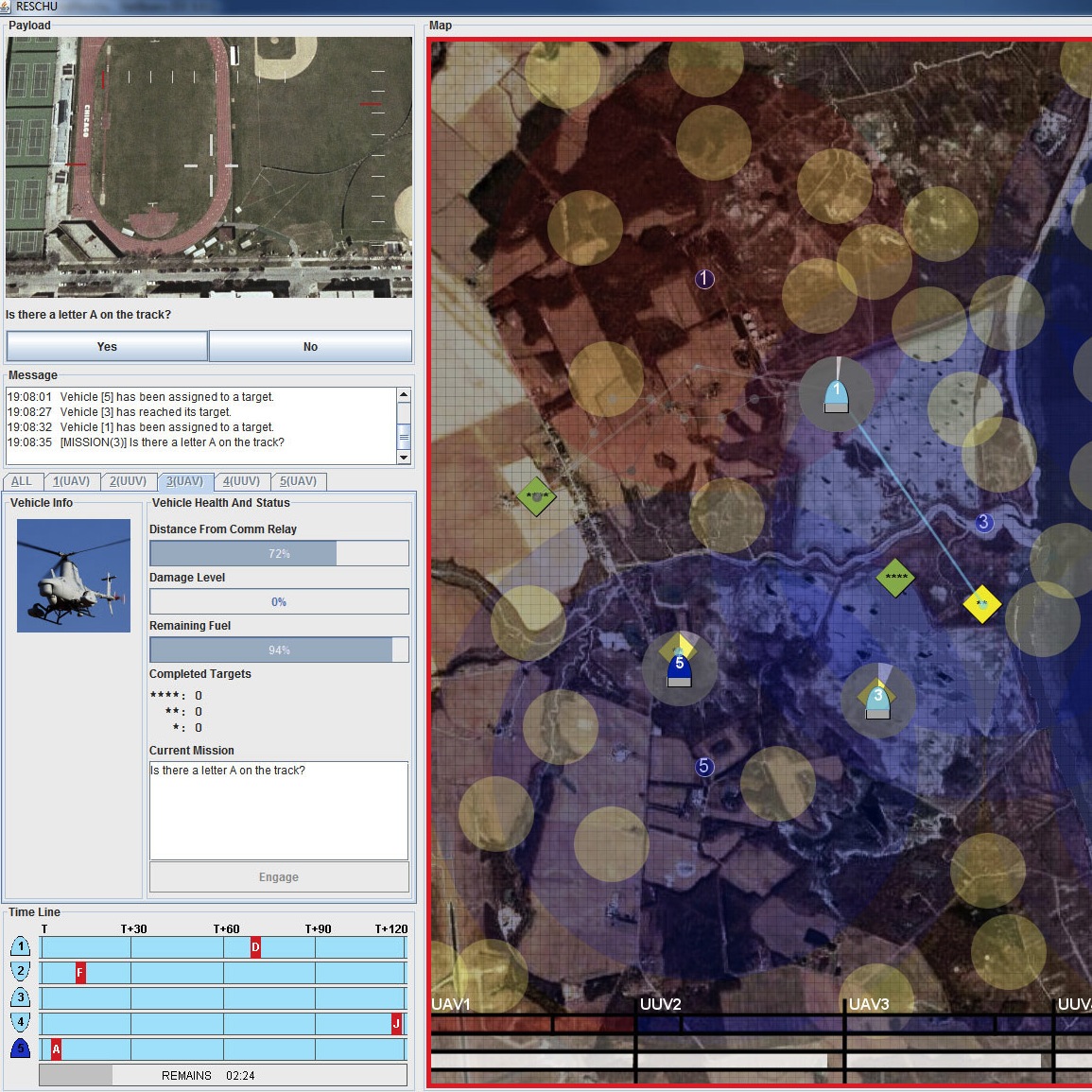

Microworld Lab Study of Supervisory Control Automation

With the Cognitive Systems Laboratory (CSL) and the MIT Humans and Automation Lab, microworld studies were conducted to examine people’s reliance on and compliance with automation at varying levels of transparency in supervisory control of heterogeneous unmanned vehicles.

Image: Screenshot of the microworld environment used to study trust, human performance, and different types of automation.

Ethnographic Field Studies in Healthcare

In collaboration with Enid Montague’s Human Computer Interaction Lab at UW-Madison, later the WHEEL Lab at Northwestern, projects were conducted on consumer health IT, trust in smart medical devices, and electronic health record workflow during primary care visits and the care delivery process. In the summer of 2012, a field study project with NYU collaborators explored design guidelines for a shared decision-making device.

Image: Video-observation screenshot of a simulated doctor-patient visit.